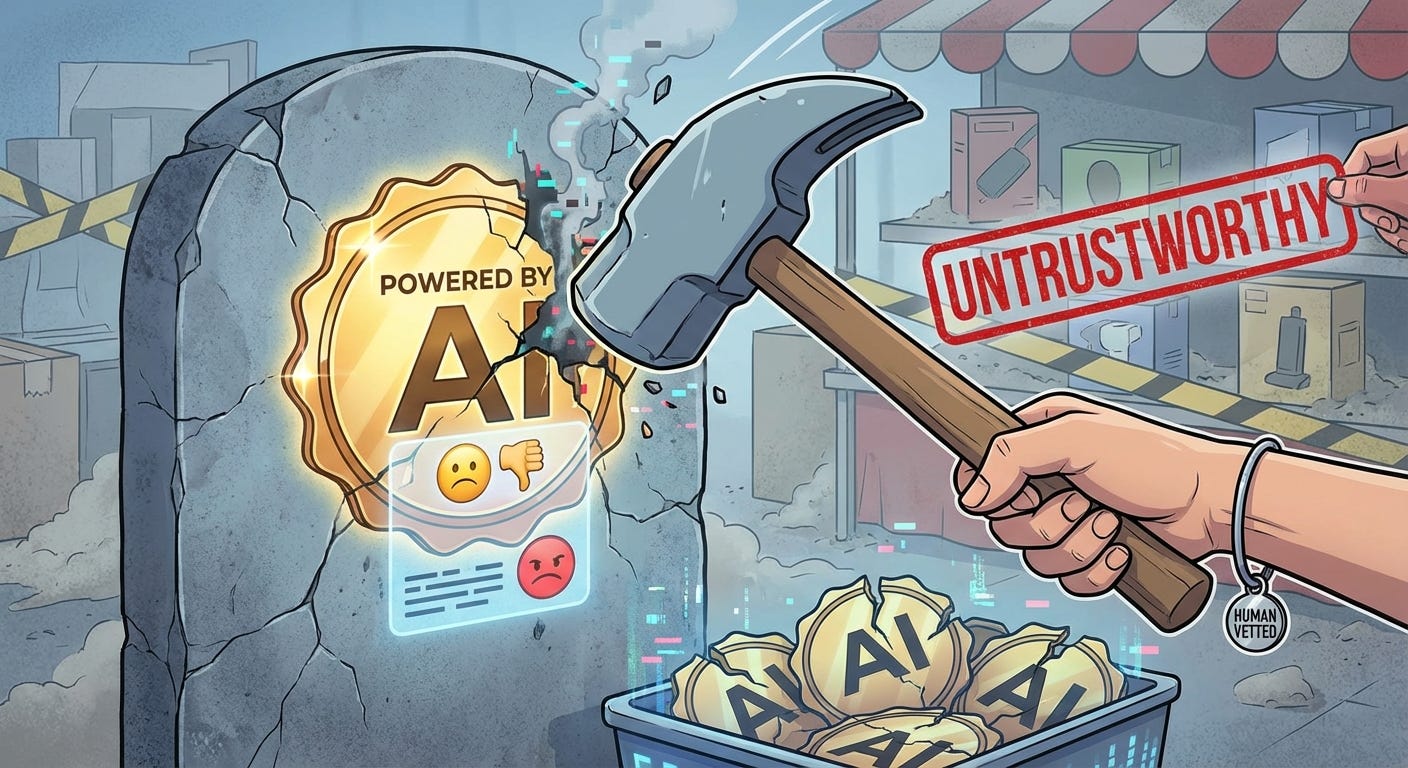

AI Killed "Powered by AI"

The label that was supposed to sell your product is now the reason users don't trust it

There’s a moment every product person dreads now.

A user lands on your app, sees “AI-powered” somewhere in the UI — a badge, a headline, a little sparkle icon — and their shoulders drop. Not excitement. Not curiosity. Just a quiet, involuntary oh. Like they just read “some assembly required” on a Christmas morning box.

That shift happened fast. Eighteen months ago, “powered by AI” was a conversion driver. Investors wanted it in your deck. Users wanted it in your product. It meant smart, modern, ahead of the curve.

Today it means: this might not work, and when it doesn’t, it’ll fail in a weird way I can’t predict.

You’re building something real, something that actually works, and you’re fighting a reputation you didn’t create. That’s the situation. And ignoring it doesn’t make it go away.

How We Got Here

The industry had a collective delusion in 2023. The delusion was this: if it sounds confident, it must be right. Ship fast, label everything AI, watch the signups roll in.

And for a while, it worked. Curiosity is a powerful acquisition channel. People signed up to try things. Products got traction. Valuations went up.

But users actually used these products. And what they found was a specific, consistent flavor of failure that they’d never experienced with software before. Not “this is broken” broken. More like “this answered the wrong question, beautifully.” Summaries that missed the point. Chatbots that apologized in complete sentences while solving nothing. Suggestions that were plausible, confident, and completely wrong.

The failure mode wasn’t incompetence. It was overconfident incompetence with perfect grammar. That’s somehow worse. A 404 error you understand. A well-written wrong answer you have to fact-check manually — that costs you time and erodes trust simultaneously.

So users built a heuristic. Burn someone enough times and their brain does what brains do: it generalizes. See an AI label, lower your expectations. It’s not fair to every product. It doesn’t matter that it’s not fair.

The Label Is Now Load-Bearing in the Wrong Direction

Here’s the part nobody’s writing about: the most serious AI builders are quietly removing “AI” from their UI copy.

Not because they’re ashamed of the tech. Because they’ve done the research, run the tests, seen the session recordings — and “AI” in the label is actively hurting conversion and trust. Users who don’t see the label engage more naturally. They just use the feature. They don’t come in with the pre-loaded skepticism that makes them second-guess every output.

That’s a strange position to be in. You have a genuinely good product, built on technology that took billions of dollars and thousands of engineers to create, and your best UX move is to not mention it.

But this is where we are. The label is radioactive for a segment of users — and that segment is growing, not shrinking. Every bad AI product that ships makes the problem worse for everyone else. It’s a tragedy of the commons running in real time.

What Users Actually Lost Trust In

It’s worth being precise here, because “users don’t trust AI” is too blunt to be useful.

What users lost trust in, specifically, is AI as an autonomous decision-maker in contexts that matter. They’ve internalized, correctly, that LLM outputs need verification. They may not know why, and they couldn’t explain attention mechanisms or hallucination dynamics, but they know from experience that the thing sounds right more reliably than it is right.

So when they see “AI” on a feature that’s making a recommendation, drafting something on their behalf, or summarizing information they’ll act on — they hesitate. Because acting on a plausible-but-wrong AI output has a cost. Sometimes a real cost.

What they haven’t lost trust in is AI as a tool they control. Autocomplete in an IDE, grammar suggestions in a writing app, search that understands intent — these AI features have high adoption because the user stays in the loop. They’re augmented, not replaced. The label barely registers because the feature behavior earns trust on its own.

The distinction is autonomy. Users are fine with AI doing things with them. They’re skeptical of AI doing things for them. And a lot of what got shipped in the past two years was the latter, dressed up as the former.

The “Sparkle Icon” Problem

There is an entire visual language of AI that’s become its own warning system.

The sparkle ✨. The gradient shimmer. The “generating...” skeleton screen that pulses for four seconds before producing something usable 60% of the time. These patterns are now so universally associated with “this might disappoint you” that they’ve become negative affordances.

The industry trained users to lower their expectations before they even see the output. Every time a shimmer loaded and produced garbage, they filed it away. Now the shimmer itself triggers the “brace for disappointment” response. Pavlov would find this fascinating.

This is a UX debt the industry created collectively. Individual products are paying for it even when their outputs are genuinely good.

The fix isn’t cosmetic. Changing your shimmer to a different animation doesn’t solve it. What solves it is competence at the moment of truth, delivered consistently enough that the user builds a new association. That takes longer than shipping a redesign. There’s no shortcut.

How You Actually Earn the Label Back

The path forward isn’t to hide AI or rebrand it. It’s to earn the label through behavior before you claim it through copy.

Show, don’t label. If your AI feature is good, it’ll demonstrate that in the first interaction. Lead with the output, not the badge. Let users feel the value before you tell them what powered it. Once they trust the feature, they don’t care what’s under the hood — and if they find out it’s AI, that becomes a positive discovery instead of a raised eyebrow.

Design for the wrong answer. Every AI feature fails sometimes. The question is what happens when it does. If your fallback is a confusing half-answer with nowhere to go, users lose trust faster than if you’d shown nothing. Build the error state, the low-confidence state, the “I’m not sure” state with the same care you build the success state. An AI product that handles failure gracefully is more trustworthy than one that handles success impressively.
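For teams turning this into code, here is a minimal TypeScript sketch of treating the failure states as first-class outcomes rather than afterthoughts. It assumes, hypothetically, that your pipeline produces a confidence score between 0 and 1 (from a verifier step, a retrieval match, or similar; many model APIs do not hand you a calibrated one). The type names, `toUiState`, the copy strings, and the 0.3 and 0.7 cutoffs are all illustrative assumptions, not a standard.

```typescript
// Hypothetical result shape from an AI pipeline; assumes a 0..1 confidence score exists.
type AiResult =
  | { kind: "answer"; text: string; confidence: number }
  | { kind: "error"; message: string };

// Every state the UI must render, each designed with the same care as success.
type UiState =
  | { kind: "success"; text: string; note: string }
  | { kind: "low-confidence"; text: string; note: string }
  | { kind: "not-sure"; note: string }
  | { kind: "error"; note: string };

// Illustrative cutoffs; real values would come from your own evaluation data.
const NOT_SURE_BELOW = 0.3;
const LOW_CONFIDENCE_BELOW = 0.7;

function toUiState(result: AiResult): UiState {
  switch (result.kind) {
    case "error":
      // Give the user somewhere to go instead of a dead end.
      return {
        kind: "error",
        note: "Something went wrong. Retry, or continue without the suggestion.",
      };
    case "answer":
      if (result.confidence < NOT_SURE_BELOW) {
        // Too weak to show: say so plainly rather than present a guess.
        return {
          kind: "not-sure",
          note: "I'm not sure about this one. Try rephrasing, or check the source directly.",
        };
      }
      if (result.confidence < LOW_CONFIDENCE_BELOW) {
        // Show the output, but flag it clearly.
        return {
          kind: "low-confidence",
          text: result.text,
          note: "AI-generated, low confidence. Please verify before acting on it.",
        };
      }
      return { kind: "success", text: result.text, note: "AI-generated, please verify." };
    default: {
      // Exhaustiveness check: a new AiResult kind that isn't handled here fails to compile.
      const unhandled: never = result;
      return unhandled;
    }
  }
}
```

Because `UiState` is a discriminated union, the rendering code receives the not-sure and error cases as explicit values it has to deal with, and a `never`-typed default like the one above can turn a forgotten case into a compile-time error instead of a confusing half-answer in production.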

Be honest about what it is and isn’t doing. “AI-generated, please verify” is not a weakness. It’s a trust signal. It tells users you know the limitations, you’re not pretending otherwise, and you respect their time enough to not let them get burned. That’s the opposite of what the bad actors did. Lean into it.

Earn the label incrementally. Don’t launch with “AI-powered everything.” Launch with one feature that’s genuinely excellent, let users discover it works, then expand. Trust compounds. So does skepticism.

The Real Problem

The products that burned users didn’t just set back their own trust scores. They set back the category. Every developer building a serious AI feature today is fighting a deficit they didn’t create.

That’s genuinely unfair. It’s also the reality of building in a market where “AI” got used as a marketing adjective before it was earned as a product descriptor.

The label will recover. It usually does: “mobile” went through the same cycle, and so did “cloud.” Technologies get overhyped, they fail publicly, expectations recalibrate, and then the products that actually work quietly become indispensable.

We’re in the recalibration phase. The builders who get through it are the ones who stop trying to borrow credibility from a label and start building enough credibility that the label borrows from them.

“Powered by AI” should mean something again. Make your product mean it first.